How does police use of live facial recognition (LFR) work?

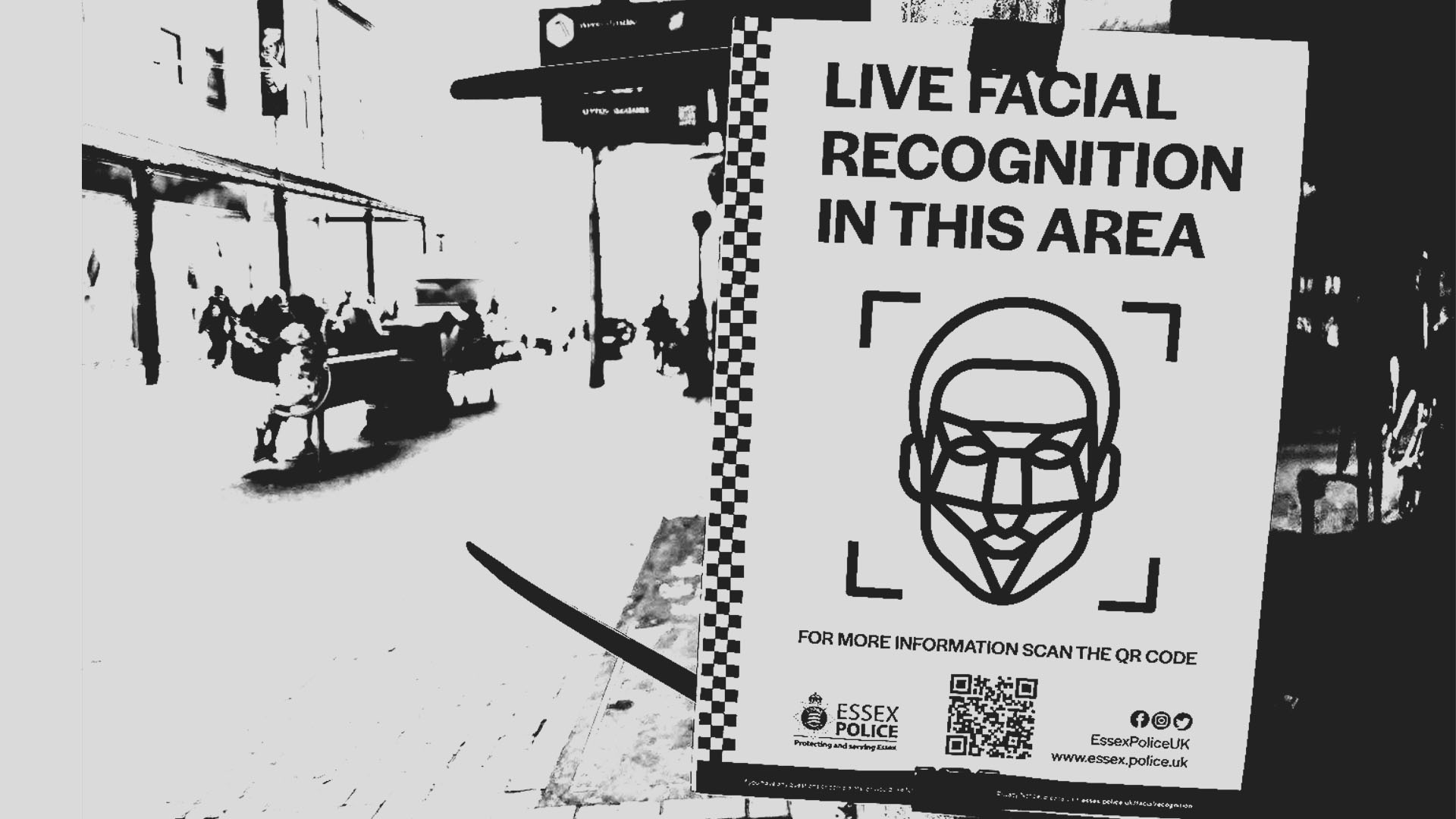

Live facial recognition (LFR) matches faces on live surveillance camera footage against a police watchlist in real time. Before a deployment, the police prepare a watchlist, which can comprise police-originated images, such as custody images from the Police National Database, and non-police-originated images, for example publicly available, open source images or information shared by other public bodies.

At the deployment, a camera will capture a live video feed, from which the LFR software will detect human faces, extract the facial features and convert them into a biometric template to be compared against those held on the watchlist. The software generates a numerical similarity score to indicate how similar a captured facial image is to any face on the watchlist. Police set a threshold for these scores, and any matches above this threshold are flagged to the police, who may then decide to stop the individual.
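The scoring and threshold step described above can be illustrated with a minimal sketch. It assumes that biometric templates are plain lists of numbers and that similarity is measured with cosine similarity; real LFR products use proprietary templates and scoring methods, so the function names, watchlist entries, values and threshold below are all hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Similarity between two templates; closer to 1.0 means more alike."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def flag_matches(probe, watchlist, threshold):
    """Return (entry, score) pairs scoring above the threshold, highest first."""
    scored = [
        (entry, cosine_similarity(probe, template))
        for entry, template in watchlist.items()
    ]
    alerts = [(entry, score) for entry, score in scored if score > threshold]
    return sorted(alerts, key=lambda pair: pair[1], reverse=True)


# Hypothetical watchlist templates (illustrative values only).
watchlist = {
    "entry_A": [0.9, 0.1, 0.3],
    "entry_B": [0.1, 0.8, 0.5],
}

# Hypothetical template extracted from a face detected in the live feed.
probe = [0.88, 0.15, 0.28]

# Only entries scoring above the operator-chosen threshold raise an alert.
alerts = flag_matches(probe, watchlist, threshold=0.95)
for entry, score in alerts:
    print(f"Alert: possible match with {entry} (score {score:.3f})")
```

In this sketch only `entry_A` is flagged. The threshold is a policy choice rather than a property of the software: lowering it produces more alerts, including more false matches, while raising it produces fewer.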

What is the difference between live and retrospective facial recognition?

It is important to draw a distinction between live facial recognition and retrospective facial recognition. Live facial recognition uses a real-time video feed to biometrically scan the faces of members of the public almost instantaneously. Retrospective facial recognition (“RFR”) uses facial recognition software on either still images or video recordings taken in the past and compares the detected faces to photographs of known individuals held on photo databases.

Whilst RFR may have limited use as a forensic investigatory tool, subject to strict regulation, live facial recognition amounts to constant generalised surveillance. The British public would not be comfortable with fingerprint scanners on our high streets; there is no reason we should accept being subjected to a biometric police line-up either.

How have other states regulated LFR?

It is noteworthy that LFR is most enthusiastically embraced by authoritarian regimes, like Russia and China, whilst other democratic countries have taken measures to restrict its use. Several US states and cities have implemented bans and restrictions on the use of LFR. The EU has adopted the AI Act, which prohibits the use of LFR for law enforcement purposes, except in the most serious and strictly defined cases, and then only with judicial authorisation. Member states must pass domestic law in order to use LFR, and each use must be reported to the national data protection authority.

This is a far cry from the UK’s unregulated approach and lack of oversight.

Explore the full list of questions and answers here.

How does live facial recognition (LFR) work in a retail context?

Live facial recognition (LFR) matches faces on live surveillance camera footage against a watchlist in real time. Companies add individuals whom they want to exclude from a store to a tailored watchlist, which generally comprises images taken during customers’ previous visits to a store. Facial recognition software companies also offer ‘National Watchlists’ made up of images and reports of incidents of crime and disorder uploaded by their customers across the UK.

A camera placed at the entrance of a store will then capture a live video feed, from which the LFR software will detect human faces, extract the facial features and convert them into a biometric template to be compared against those held on the watchlist. The software generates a numerical similarity score to indicate how similar a captured facial image is to any face on the watchlist. Any matches above a pre-set threshold are flagged to shop staff, who then deal with the individual in line with the retailer’s policy.

Is LFR necessary to catch shoplifters?

In the retail context, LFR is often touted as a solution to combat shoplifting and anti-social behaviour. Following the ICO’s investigation of Facewatch, the regulator held that in order to comply with data protection legislation and human rights law, retailers could only place individuals on a watchlist where they are serious or repeat offenders. The evidence we have collated demonstrates that, in practice, members of the public are placed on retailers’ watchlists for very trivial reasons, including accusations of shoplifting items valued at only £1. This shows not only that LFR companies are not complying with regulatory decisions, but also that the technology is being used disproportionately, as it is not targeted solely at the most harmful perpetrators.

In the Justice and Home Affairs Committee Inquiry on ‘Tackling Shoplifting,’ Paul Gerrard, Public Affairs and Board Secretariat Director at The Co-op Group, gave oral evidence that the company has no plans to implement LFR because it “cannot see what intervention it would drive helpfully.”1 Gerrard highlighted the ethical implications of employing a mass surveillance tool in a shop, as well as the heightened risk of violence and abuse to retail employees who have to confront shoppers if the LFR system flags them. His evidence reflects our position that there is no place for this invasive software, whether from the perspective of shoppers or of retail workers.

Is shops' use of LFR just the modernisation of traditional security systems?

There is a significant difference between the level of intrusion associated with traditional security systems and that of facial recognition surveillance. LFR is an invasive form of biometric surveillance, which is linked to a deeply personal identifying feature (i.e., an individual’s face) and is deployed in public settings, often without the consent or knowledge of the person being subjected to checks. Additionally, unlike “traditional” blacklists held by shops, which might comprise photographs of known local offenders, LFR could flag an individual in a shop they have never previously visited, greatly expanding the reach of surveillance.

The private use of live facial recognition creates a new zone of privatised policing. It emboldens staff members to make criminal allegations against shoppers, without an investigation or any set standard of proof, and to ban them from other stores employing the software. Clearly, when errors are made, this has profound implications for the lives of those accused, who have little recourse for challenging the accusations. The lack of oversight and safeguards means that vulnerable individuals, including young people and those with mental health issues, are particularly at risk of being included on watchlists. It also leaves the door open to discriminatory and unfair decisions with significant impacts.
